Why I Stopped Typing to My AI Agents

TL;DR

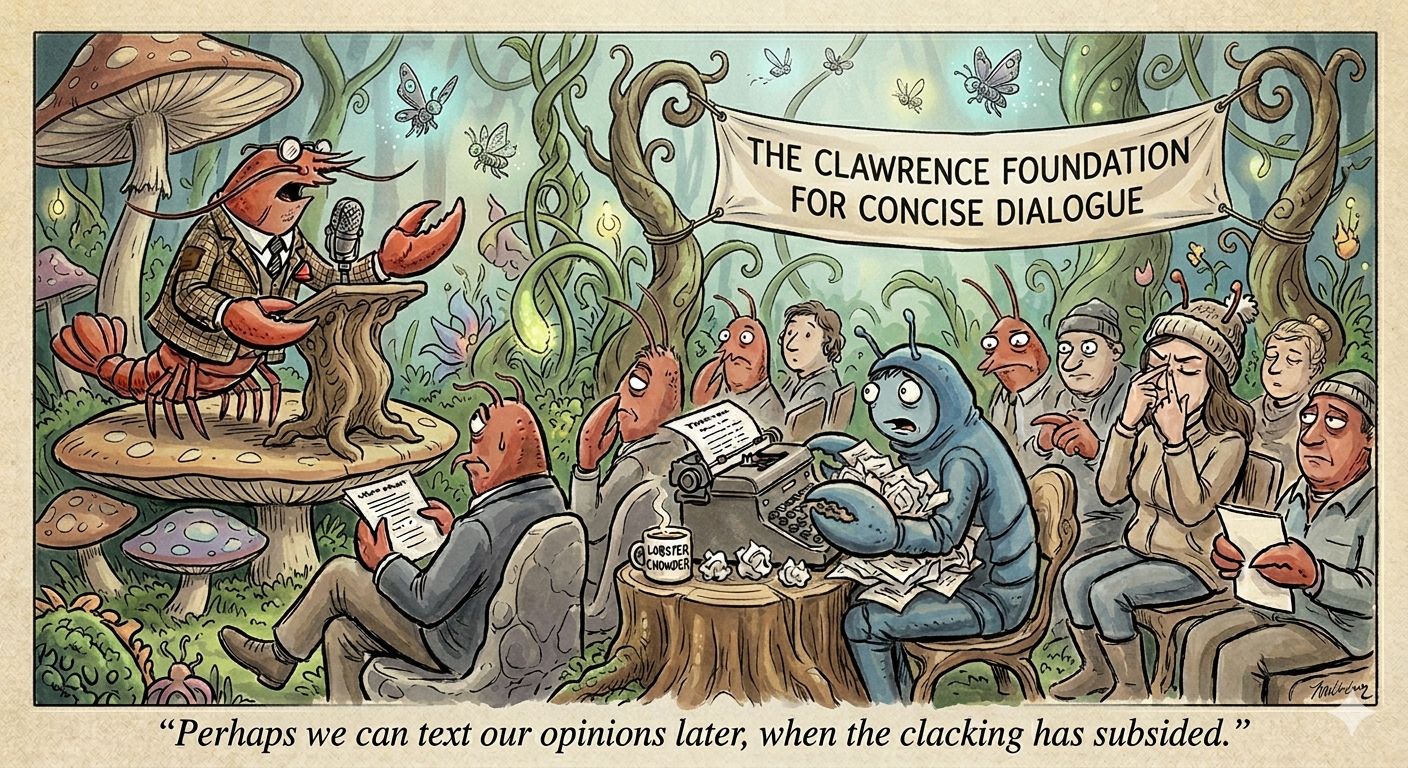

Who is still typing to their AI agents? And if you are — why?

[Not sponsored]

I switched to Wisprflow (and have since moved to Openwispr) a while back, and it quickly became my primary way of talking to AI, for both personal use and work. Honestly, I don’t think I would’ve made half the progress I have if I hadn’t made that one conscious decision to change how I interact with these tools.

My logic is simple: you don’t need to outsmart LLMs by giving them beautiful prompts. These models perform best when you give them more words, more context, more texture. With or without structure. Voice makes that effortless.

Using a local LLM to fix the natural shortcomings of dictation and speech recognition is just genius. I genuinely don’t think I can go back to typing unless I’m somewhere without a mic or in a public setting.

Plan First. Command Second.

One more thing I’ve noticed — and this is where most people go wrong with agents:

Plan first. Command second. You don’t need a dedicated Plan mode to do that. A simple constraint, such as “don’t write any code” or “don’t execute until I say so,” works.

I’ve watched people fire off one long prompt trying to get an agent to execute something complex, then wonder why it underperforms. Building context through conversation before you let an agent execute almost always works better. Give the AI the full picture first, then ask it to move.
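If you drive agents from a script rather than a chat window, the same habit can be sketched in a few lines. This is only an illustration, not any particular tool’s API: `PlanFirstSession`, `PLANNING_CONSTRAINT`, and the message format are all made-up names for this post. The idea is just that context piles up first, and the “go” message can’t be built until you explicitly approve the plan.

```python
from dataclasses import dataclass, field

# Hypothetical constraint wording, adapted from the post; adjust to taste.
PLANNING_CONSTRAINT = "Don't write any code or execute anything until I say so."


@dataclass
class PlanFirstSession:
    """Collects conversation turns and holds back execution until approved."""

    messages: list = field(default_factory=list)
    approved: bool = False

    def __post_init__(self) -> None:
        # Every session carries the planning constraint.
        self.messages.append({"role": "system", "content": PLANNING_CONSTRAINT})

    def add_context(self, text: str) -> None:
        # Each spoken or typed turn adds more of the picture.
        self.messages.append({"role": "user", "content": text})

    def approve(self) -> None:
        # Call this once you are happy with the plan.
        self.approved = True

    def execute_request(self, instruction: str) -> list:
        # Refuse to build the "go" message before the plan is approved.
        if not self.approved:
            raise RuntimeError("Plan not approved yet; keep building context.")
        go_message = {"role": "user", "content": f"Go ahead: {instruction}"}
        return self.messages + [go_message]
```

In practice you would call `add_context` a few times while talking through the problem, call `approve()` once the plan looks right, and only then pass the result of `execute_request(...)` to whatever agent you use.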

Voice makes this natural. Typing makes it a chore.

Curious — how many of you have made the switch?